Testing With Python: First-Timer Perspective

Certainly nothing groundbreaking here, just my experience diving into the world of code testing.

Code Testing

Any book, blog, or article about software development will tell you that you should always write tests for your code. Better still, you should write the tests before you write a single line of actual code. I had always thought test-driven development was something for hardcore devs who were building major applications and working in CI/CD pipelines. Testing seemed way over the top for the scripts that I normally write, and I had only ever written tests in a lab environment. Up to this point, testing for me was a lot of run > error > Ctrl+C > edit code > run again.

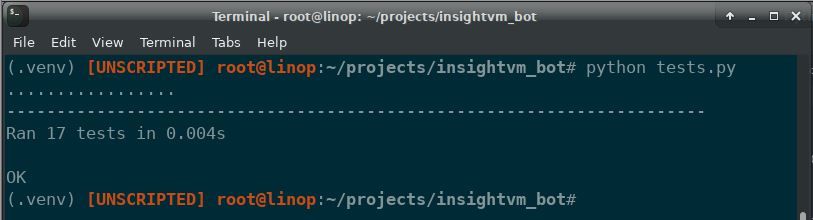

While porting my Nexpose Slackbot (waiting on final approval to open source) from Ruby to Python (more on that another time) I decided to try to be as "Pythonic" about my code as possible including writing tests. Admittedly, I did write my tests after I had a working bot instead of writing them before coding. I mostly went down this path just to prove to myself that I could. The awesome part though is that I did indeed find some bugs in my code that my live testing had not revealed.

Because of this, even though the bugs were very minor, I am now a true believer in testing and in writing tests before you start writing actual code. A few lessons learned are at the end of this post.

Unit Testing vs. Integration Testing

Any developer will already know exactly what this means but the overall concept was somewhat new to me.

Unit Testing

Unit testing involves testing very small portions of your code, usually a single function (or maybe less in some cases). I took this to mean testing the various cases I thought my code might be exposed to. Specifically, for each function I tested a normal expected case, a normal but incorrectly formatted case, and a completely invalid case.

Integration Testing

Integration testing involves more in-depth analysis of how the script/application is going to interact with external sources or even with other functions within the script/application. I found that two approaches can be used here: dummy data and mock-ups.

Dummy data is exactly what it sounds like: create or generate data that is the same as, or similar to, what the code will be exposed to. I tried at first to write the data by hand but found that I got a much more realistic set of data, with less effort, when I just generated and captured some sample data to use.
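To make the captured-data approach concrete, here is a minimal sketch. The payload is invented for illustration (its field names only mimic a Slack message event), and `message_text` is a hypothetical stand-in for real bot code, not the bot's actual implementation.

```python
import json
import unittest

# Hypothetical captured payload. The field names mimic a Slack message
# event, but every value here is made up for illustration.
CAPTURED_EVENT = """
{
  "type": "message",
  "user": "U0000000",
  "text": "<@U1111111> scan 192.168.1.1",
  "ts": "1500000000.000000"
}
"""


def message_text(raw_event):
    """Hypothetical bot helper: return the text field of a raw JSON event."""
    return json.loads(raw_event).get("text", "")


class CapturedDataTest(unittest.TestCase):
    def test_text_is_extracted(self):
        # Feed the captured sample through the code exactly as live data would be.
        self.assertEqual(message_text(CAPTURED_EVENT), "<@U1111111> scan 192.168.1.1")

    def test_missing_text_defaults_to_empty(self):
        # A hand-trimmed variant of the capture covers the malformed case.
        self.assertEqual(message_text('{"type": "message"}'), "")
```

In practice the capture would probably live in its own file next to the tests rather than in a string constant.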

What I did not know is that there are outstanding folks out there who create open-source mock-ups of popular applications to be used for integration testing. A teammate pointed me to a GitHub project that is a complete mock-up of Slack for exactly this purpose: https://github.com/Skellington-Closet/slack-mock. These mock-ups go a step beyond dummy data and actually allow for real-time interaction with a simulation of the true endpoint. Another option, of course, is to build your own mock-up...maybe someday.
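A full mock-up like slack-mock simulates the real endpoint. A much lighter, and different, technique is to swap in a fake object with the standard library's `unittest.mock`. The sketch below assumes a hypothetical `report_scan` function and a hypothetical client with a `post_message` method; neither is the real Slack API nor the bot's actual code.

```python
import unittest
from unittest import mock


def report_scan(client, channel, ip):
    """Hypothetical bot function: announce a scan through the client it is given."""
    text = f"Scan started for {ip}"
    # post_message is an assumed method name, not a real Slack SDK call.
    client.post_message(channel=channel, text=text)
    return text


class ReportScanTest(unittest.TestCase):
    def test_message_is_posted_once(self):
        # Mock() records every call made on it, so no network is needed.
        fake_client = mock.Mock()

        report_scan(fake_client, "#security", "192.168.1.1")

        fake_client.post_message.assert_called_once_with(
            channel="#security", text="Scan started for 192.168.1.1"
        )
```

This only checks that the code makes the call it should. It does not exercise a simulated endpoint the way a real mock-up does.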

Python Unit Test

The de facto library for Python testing is the unittest library. Using the library is fairly simple for basic use cases. Simply create a subclass of the unittest.TestCase class and then create your tests by writing methods within that class. The unittest.TestCase class includes a number of assert... methods to build the test cases with. All of them are defined in the documentation, but for some reason they are split up in the online docs. I have put a list of them for reference at the bottom (also so I don't have to keep scrolling through the documentation).

In my case, I was able to use testing to determine that my regex for extracting IPs and hostnames from a Slack message was not adequate. The regex did capture 'normal' use cases but there were some edge cases that caused issues. I only discovered this from writing these tests; manual testing had not revealed the issue. The result was updated regular expressions that are much more reliable. Here is an example of using unittest for this purpose:

    import unittest

    # Custom library
    import helpers

    # Class that is sub-classed from unittest.TestCase
    class ExtractIPsTest(unittest.TestCase):

        def testIPRegexValidIp(self):
            self.assertListEqual(helpers.extract_ips('192.168.1.1'), ['192.168.1.1'])

        def testIPRegexInvalidIp(self):
            self.assertListEqual(helpers.extract_ips('192.168.1.321'), [])

        def testIPRegexOtherString(self):
            self.assertListEqual(helpers.extract_ips('test string'), [])
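The helpers module itself is not shown here. As a rough idea of what a function satisfying those three tests might look like, here is a sketch. The pattern is an assumption made for illustration, not the regex the bot actually uses, and it only handles IPv4 addresses.

```python
import re

# One octet, limited to 0-255 (assumed pattern, for illustration only).
_OCTET = r"(?:25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)"

# Four octets joined by dots. The lookbehind rejects a match that starts
# right after a digit or dot; the lookahead rejects one that is followed by
# another digit or by ".digit", so '192.168.1.321' yields nothing rather
# than a partial '192.168.1.32'.
_IP_PATTERN = re.compile(rf"(?<![\d.]){_OCTET}(?:\.{_OCTET}){{3}}(?!\d|\.\d)")


def extract_ips(text):
    """Return every IPv4 address found in text, in order of appearance."""
    return _IP_PATTERN.findall(text)
```

The lookarounds are the part that plain "four groups of digits" patterns tend to miss, which is the kind of edge case the invalid-IP test is meant to catch.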

Lessons Learned

A few things stuck out at me as lessons learned from my first "testing" adventure.

- The names of the test cases in your test class must start with the word test. I wrote a few tests that just would not run for some reason, only to find out this little nugget of info.

- Test-driven development really does force you to modularize your code. I found a few cases where I was trying to do too much with a block of code, which made testing very difficult and convoluted. In the future (by writing tests first) I plan to really make an effort to keep my code blocks small to facilitate easier, faster, and more accurate testing.

- There is a challenge in writing tests in that you have to be careful not to write your test so that your code passes. This is easily solved by writing tests first. In my case though, writing tests after the fact, I had to be very careful to truly write the tests for my requirements and not just so the tests would pass.

- Writing tests is actually kind of fun. There is an additional level of confidence that you gain from knowing that you wrote tests for your code and that they accurately demonstrate if your code is valid or not.

- There is a ton more work for me to do with testing, including using a mock-up to do full integration testing and using unittest's setUp/tearDown functionality to dynamically build more complicated, realistic, and valid test data.
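Two of the points above, the test naming rule and setUp/tearDown, can be sketched in a few lines. The class and its data are made up for illustration.

```python
import unittest


class FixtureDemoTest(unittest.TestCase):
    def setUp(self):
        # Runs before every test method, so each test gets fresh data.
        self.messages = ["scan 192.168.1.1", "scan 10.0.0.5"]

    def tearDown(self):
        # Runs after every test method, whether it passed or failed.
        self.messages.clear()

    def test_fixture_has_two_messages(self):
        self.assertEqual(len(self.messages), 2)

    def test_mutation_does_not_leak(self):
        # Changing the fixture here does not affect the other test.
        self.messages.pop()
        self.assertEqual(len(self.messages), 1)

    def check_never_collected(self):
        # No "test" prefix, so the default loader silently skips this method.
        self.fail("this would fail if it ever ran")


# Ask the default loader which methods it considers tests.
collected = unittest.defaultTestLoader.getTestCaseNames(FixtureDemoTest)
```

Printing `collected` lists only the two methods whose names start with test, which is exactly why a misnamed test never shows up as a failure.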

Unit Test Cases

A simpler reference (to me) of test checks in unittest. Deprecated methods omitted, documentation description summarized in some cases or omitted if self explanatory.

assertAlmostEqual(a, b) - Checks that a and b are equal after rounding (7 decimal places by default); a delta can be given instead

assertCountEqual(a, b) - Compares unordered sequences for equal elements

assertDictContainsSubset(subset, dictionary) - Checks whether dictionary is a superset of subset. Deprecated since Python 3.2 and removed in Python 3.12.

assertDictEqual(a, b)

assertEqual(a, b)

assertFalse(expr)

assertGreater(a, b)

assertGreaterEqual(a, b)

assertIn(a, b)

assertIs(a, b)

assertIsInstance(a, b)

assertIsNone(obj)

assertIsNot(a, b)

assertIsNotNone(obj)

assertLess(a, b)

assertLessEqual(a, b)

assertListEqual(a, b)

assertLogs(logger, level) - Checks if a log message was generated

assertMultiLineEqual(a, b) - Checks equality of multiline strings

assertNotAlmostEqual(a, b) - See AlmostEqual

assertNotEqual(a, b)

assertNotIn(a, b)

assertNotIsInstance(a, b)

assertNotRegex(text, regex) - Fails the test if the text matches the regular expression.

assertRaises(expected_exception) - Fails unless the expected exception is raised.

assertRaisesRegex(expected_exception, regex) - Checks for a specific message in the raised exception.

assertRegex(text, regex) - Fails the test if the text does not match the regex

assertSequenceEqual(a, b) - Similar to assertCountEqual but for ordered lists.

assertSetEqual(a, b)

assertTrue(expr)

assertTupleEqual(a, b)

assertWarns(expected_warning) - Fails unless a warning of class expected_warning is triggered

assertWarnsRegex(expected_warning, regex) - Similar to RaisesRegex but for warnings.
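A few of the less obvious checks from the list are easier to follow in use. This is a small sketch with made-up values; the "bot" logger name is just an example.

```python
import logging
import unittest
import warnings


class AssertExamplesTest(unittest.TestCase):
    def test_almost_equal(self):
        # Floating-point noise is ignored (7 decimal places by default).
        self.assertAlmostEqual(0.1 + 0.2, 0.3)
        # Alternatively, allow an explicit absolute difference.
        self.assertAlmostEqual(100, 103, delta=5)

    def test_count_vs_sequence(self):
        # Same elements, order ignored.
        self.assertCountEqual([3, 1, 2], [1, 2, 3])
        # Same elements, order matters.
        self.assertSequenceEqual([1, 2, 3], [1, 2, 3])

    def test_raises(self):
        # Used as context managers, these wrap the code expected to fail.
        with self.assertRaises(ValueError):
            int("not a number")
        with self.assertRaisesRegex(ValueError, "invalid literal"):
            int("not a number")

    def test_regex(self):
        self.assertRegex("host-01.example.com", r"^host-\d+")
        self.assertNotRegex("192.168.1.1", r"[a-z]")

    def test_logs(self):
        with self.assertLogs("bot", level="INFO") as captured:
            logging.getLogger("bot").info("scan started")
        self.assertEqual(captured.output, ["INFO:bot:scan started"])

    def test_warns(self):
        with self.assertWarns(DeprecationWarning):
            warnings.warn("old call", DeprecationWarning)
```

The raises, logs, and warns checks are all shown in their context-manager form, which reads more naturally than passing a callable.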